What is Data Management?

Data management is the practice of collecting, storing, organizing, maintaining, and utilizing data effectively and securely across an organization. It involves a set of processes, policies, and technologies designed to ensure data accuracy, availability, and accessibility while protecting data integrity and security. Effective data management enables organizations to derive valuable insights from their data, support informed decision-making, ensure regulatory compliance, and enhance overall operational efficiency. Data management is essential for maximizing the potential of data as a strategic asset in today's data-driven world.

But why is data management so important today? The answer: digitalization. Modern manufacturing and logistics environments, for example, are becoming more complex and generate enormous volumes of data. Part of this digital revolution is the increasing adoption of IoT technologies and devices in Industry 4.0. In the context of the Industrial Internet of Things (IIoT) and Industry 4.0, effective IoT data management is crucial for leveraging the full potential of connected devices and smart systems. On-premises data management systems are increasingly being replaced by cloud platforms that can process data in real time.

What Are the Key Components of Data Management?

The success of a company’s data asset management system depends on its data architecture. A data architecture is the comprehensive framework that defines how data is collected, stored, integrated, and managed within a company. It also defines the basic data environment for advanced analytics and business intelligence (BI). Key components of a data architecture include data models (conceptual, logical, and physical), data storage solutions, data integration processes, metadata management, data governance, data security, and data quality management. The following sections explain the main components of a data architecture and the data management process.

Data Capture, Integration, and Processing

The first stage of the data management lifecycle involves data capture, collection, and processing. Raw data is captured and collected from data sources such as IoT devices, web Application Programming Interfaces (APIs), mobile apps, surveys, and forms. The data is then processed automatically, typically using data integration techniques such as Extract, Transform, Load (ETL) or Extract, Load, Transform (ELT).

ETL is the established method for integrating and organizing data from different datasets: data is transformed before it is loaded into the target system. ELT, on the other hand, loads raw data first and transforms it inside the target system. ELT is becoming more popular with the increasing use of cloud data platforms, which can load large, frequently updated data volumes quickly and provide the computing power to transform them on demand. During data processing, data is filtered, merged, or aggregated, depending on the application and purpose. Processed data can then be fed into business intelligence platforms or into machine learning algorithms for predictive analytics, for example.
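The practical difference between ETL and ELT is the order of the steps. The Python sketch below illustrates it with in-memory lists standing in for a source system and a target store. The record fields (`sensor`, `value`) and the function names are hypothetical; real pipelines would use dedicated integration tools rather than hand-written functions.

```python
from collections import defaultdict

# Hypothetical raw records from a data source (e.g. IoT sensors); None marks a failed reading.
RAW = [
    {"sensor": "temp-1", "value": 21.5},
    {"sensor": "temp-1", "value": None},
    {"sensor": "temp-2", "value": 19.0},
    {"sensor": "temp-1", "value": 22.5},
]


def extract():
    """Extract: read raw records from the source (here, a copy of an in-memory list)."""
    return [dict(r) for r in RAW]


def transform(records):
    """Transform: filter out invalid readings, then aggregate the mean value per sensor."""
    sums, counts = defaultdict(float), defaultdict(int)
    for r in records:
        if r["value"] is None:  # filter step
            continue
        sums[r["sensor"]] += r["value"]
        counts[r["sensor"]] += 1
    return {s: sums[s] / counts[s] for s in sums}  # aggregate step


def etl(warehouse):
    """ETL: transform before loading, so only processed data reaches the target."""
    warehouse.update(transform(extract()))
    return warehouse


def elt(lake):
    """ELT: load the raw data first; the transformation runs later, on the stored data."""
    lake.extend(extract())
    return transform(lake)


print(etl({}))  # {'temp-1': 22.0, 'temp-2': 19.0}
print(elt([]))  # same aggregates, but the raw records are also kept in the lake
```

Both paths produce the same aggregates here; the design difference is that ELT keeps the untouched raw records, so they can be transformed again later for a different purpose.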

Data Storage

Depending on the type and purpose of the data, data can be stored either before or after processing. Typical data storage systems are data warehouses and data lakes.

A data lake is a centralized repository designed to store large volumes of raw, unstructured (e.g. audio files or images), semi-structured (e.g. webpages or XML files), and structured data (e.g. Excel sheets or database tables) from various sources. It is highly scalable and allows data to be stored in its native format until it is needed for analysis. This means that data lakes typically store data before it is processed for analytics (ELT). Examples of services and frameworks commonly used to build data lakes include Amazon S3, Microsoft Azure Data Lake Storage, Google Cloud Storage, and Apache Hadoop.

A data warehouse is a centralized repository designed to store structured data with pre-defined schemas from various sources. It is optimized for querying and analysis, making it an integral part of business intelligence and reporting processes. Data warehousing is used after data has been processed (ETL).
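The contrast between the two can be summarized as schema-on-read (data lake) versus schema-on-write (data warehouse). The Python sketch below is a minimal illustration under simplifying assumptions: a list of JSON strings stands in for the data lake, and an in-memory SQLite table from the standard library's `sqlite3` module stands in for the warehouse. The field names (`machine`, `temp_c`, `note`) are hypothetical.

```python
import json
import sqlite3

# Data lake stand-in: raw records kept as-is in their native format (here, JSON strings).
lake = [
    '{"machine": "press-1", "temp_c": 71.2, "note": "after maintenance"}',
    '{"machine": "press-2", "temp_c": "n/a"}',
]

# Data warehouse stand-in: a table with a predefined schema (in-memory SQLite database).
warehouse = sqlite3.connect(":memory:")
warehouse.execute("CREATE TABLE readings (machine TEXT NOT NULL, temp_c REAL NOT NULL)")


def load_into_warehouse(raw):
    """Schema-on-write: only records that fit the predefined schema are loaded."""
    loaded = 0
    for line in raw:
        record = json.loads(line)
        try:
            temp = float(record["temp_c"])
        except (KeyError, TypeError, ValueError):
            continue  # rejected: does not match the warehouse schema
        warehouse.execute("INSERT INTO readings VALUES (?, ?)", (record["machine"], temp))
        loaded += 1
    return loaded


def read_from_lake(field):
    """Schema-on-read: structure is applied only at query time; missing fields become None."""
    return [json.loads(line).get(field) for line in lake]
```

Loading the lake contents into the warehouse keeps only the `press-1` record, while the lake still holds both records, including the free-text `note` field that the warehouse schema does not define.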

Data Governance

Data governance is a comprehensive framework of policies, procedures, and standards for data collection, storage, access, and sharing. Data governance teams assign specific roles, such as data stewards, who are responsible for maintaining data quality and integrity. Governance policies also define who may access which data, so that access is restricted to authorized users, which is crucial for maintaining data privacy. The data governance framework facilitates data integration, enhances accuracy, and ensures compliance with regulations, while robust security measures protect against unauthorized access and breaches. In summary, data governance maximizes the value of data assets by prioritizing data quality, data security, and data privacy.
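Access rules defined by a governance policy are often enforced as role-based access control. The Python sketch below is a minimal, hypothetical example: the policy maps datasets to roles and permitted actions, and anything not explicitly allowed is denied. The dataset name, roles, and actions are assumptions for illustration, not part of any specific governance tool.

```python
from dataclasses import dataclass, field

# Hypothetical governance policy: which roles may perform which actions on which dataset.
POLICY = {
    "production_metrics": {
        "data_steward": {"read", "update"},
        "analyst": {"read"},
    },
}


@dataclass
class User:
    name: str
    roles: set = field(default_factory=set)


def is_allowed(user: User, dataset: str, action: str) -> bool:
    """Grant access only if one of the user's roles permits the action (deny by default)."""
    rules = POLICY.get(dataset, {})
    return any(action in rules.get(role, set()) for role in user.roles)
```

For example, a user with the `data_steward` role may update `production_metrics`, an `analyst` may only read it, and a user without roles, or a request for an unknown dataset, is always refused.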

Data Security

Data security refers to the practices and technologies implemented to protect data from unauthorized access, breaches, corruption, or theft. It involves a range of measures, including encryption, access controls, authentication protocols, and regular security audits, to ensure that sensitive information remains confidential, intact, and accessible only to authorized users. Data security strategies also cover detecting and responding to security incidents, and they ensure compliance with legal and regulatory requirements for data protection.
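One common building block of authentication is storing only salted, deliberately slow hashes of passwords instead of the passwords themselves. The Python sketch below uses the standard library's `hashlib.pbkdf2_hmac` and `hmac.compare_digest`; the iteration count is an assumption and should follow current guidance and your hardware. It illustrates the principle only and is not a complete authentication system.

```python
import hashlib
import hmac
import os
from typing import Optional, Tuple

ITERATIONS = 200_000  # assumption: choose according to current guidance and your hardware


def hash_password(password: str, salt: Optional[bytes] = None) -> Tuple[bytes, bytes]:
    """Derive a salted PBKDF2-HMAC-SHA256 hash; store (salt, digest), never the password."""
    salt = salt if salt is not None else os.urandom(16)
    digest = hashlib.pbkdf2_hmac("sha256", password.encode("utf-8"), salt, ITERATIONS)
    return salt, digest


def verify_password(password: str, salt: bytes, expected: bytes) -> bool:
    """Recompute the hash with the stored salt and compare in constant time."""
    _, digest = hash_password(password, salt)
    return hmac.compare_digest(digest, expected)
```

The random per-user salt ensures that identical passwords produce different hashes, and the constant-time comparison avoids leaking information through response timing.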

What is Engineering Data Management (EDM)?

Engineering Data Management (EDM) is one kind of data management framework. It refers to the systematic approach to managing data generated and used throughout the lifecycle of a product or project. This includes the collection, storage, organization, integration, analysis, and maintenance of engineering data related to design, development, production, and maintenance activities. These processes ensure data quality, accessibility, security, and usability.

Engineering data refers to all the information generated, used, and maintained throughout the lifecycle of engineering projects and products. This includes, for example, design data such as Computer-Aided Design (CAD) models and technical specifications, simulation and analysis data such as Finite Element Analysis (FEA) and Computational Fluid Dynamics (CFD) models, maintenance and operational data, IoT data, regulatory compliance documentation, and production data.
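A core task in managing engineering data over the lifecycle is keeping track of revisions. The Python sketch below models a single engineering item (for example, a CAD model or an FEA input file) with an append-only revision history and a SHA-256 checksum per revision, so earlier states stay traceable and unintended changes can be detected. The classes and fields are hypothetical; EDM and PDM systems provide much richer versioning, check-out, and approval workflows.

```python
import hashlib
from dataclasses import dataclass, field
from typing import List


@dataclass
class Revision:
    number: int
    author: str
    checksum: str  # SHA-256 of the file content, used to detect unintended changes


@dataclass
class EngineeringItem:
    """Hypothetical record for one engineering artifact, e.g. a CAD model."""
    item_id: str
    revisions: List[Revision] = field(default_factory=list)

    def check_in(self, content: bytes, author: str) -> Revision:
        """Append a new revision; earlier revisions are kept, never overwritten."""
        rev = Revision(len(self.revisions) + 1, author, hashlib.sha256(content).hexdigest())
        self.revisions.append(rev)
        return rev

    def latest(self) -> Revision:
        """Return the most recent revision."""
        return self.revisions[-1]
```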

EDM is important for companies in industries where complex engineering data needs to be managed efficiently. These include aerospace and defense, and EDM is also part of digitalization in the automotive industry, in industry and manufacturing, and in construction, for example. The goal is to ensure data integrity and accuracy, improve stakeholder collaboration, facilitate regulatory compliance, and support engineers, designers, and other stakeholders in decision-making. EDM is essential to the success of complex engineering projects.

Data Management and OPC UA

IIoT involves the integration of various systems and devices. This requires a comprehensive approach to data management to ensure interoperability and data consistency across different platforms and technologies. Open Platform Communications Unified Architecture (OPC UA) plays an important role here. So what is OPC UA good for?

OPC UA is integral to modern data management in industrial settings by providing standardized, secure, and scalable communication protocols. By providing a common language for data exchange, OPC UA simplifies the integration of data from multiple sources into a unified data management system. This ensures that data from different devices and systems can be aggregated, processed, and analyzed consistently.
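To illustrate what a common language for data exchange means in practice, the Python sketch below maps readings from two hypothetical vendor formats onto one shared structure with a value, a quality flag, and a source timestamp. The structure is loosely modeled on the value, status, and timestamp information that OPC UA attaches to data values, but it is plain Python, not the OPC UA API; the vendor formats, field names, and node paths are invented for illustration.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class Reading:
    """Simplified common structure: value, quality, and source timestamp."""
    node: str           # hypothetical path, e.g. "Line1/Oven/Temp"
    value: float        # temperature in degrees Celsius
    good: bool          # stands in for an OPC UA status code
    source_time: datetime


def from_vendor_a(msg: dict) -> Reading:
    # Hypothetical vendor A: temperature in Fahrenheit, Unix timestamp in seconds.
    return Reading(
        node=msg["tag"],
        value=(msg["temp_f"] - 32) * 5 / 9,
        good=msg.get("ok", True),
        source_time=datetime.fromtimestamp(msg["ts"], tz=timezone.utc),
    )


def from_vendor_b(msg: dict) -> Reading:
    # Hypothetical vendor B: temperature in Celsius, ISO 8601 timestamp string.
    return Reading(
        node=msg["path"],
        value=msg["celsius"],
        good=msg["quality"] == "GOOD",
        source_time=datetime.fromisoformat(msg["time"]),
    )
```

Once both sources produce `Reading` objects, downstream aggregation and analysis no longer need to know which vendor a value came from. In a real installation, an OPC UA server or gateway would perform this mapping.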

Real-time data is crucial for monitoring operations, predictive maintenance, and immediate decision-making. OPC UA enables the timely collection and transfer of real-time data, ensuring that data management systems have access to the most current information for analysis and action.
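OPC UA clients typically receive current values through subscriptions: the server monitors selected items and reports changes, optionally filtered by a deadband, instead of the client polling continuously. The Python sketch below shows this report-by-exception idea with an absolute deadband in simplified form. The class and method names are hypothetical and not part of any OPC UA SDK.

```python
from typing import Callable, List, Optional


class MonitoredValue:
    """Simplified report-by-exception: notify subscribers only when a value changes enough.

    Loosely modeled on OPC UA monitored items with an absolute deadband; not the OPC UA API.
    """

    def __init__(self, deadband: float = 0.0):
        self.deadband = deadband
        self.last_reported: Optional[float] = None
        self.subscribers: List[Callable[[float], None]] = []

    def subscribe(self, callback: Callable[[float], None]) -> None:
        self.subscribers.append(callback)

    def sample(self, value: float) -> None:
        """Called for every new sample; forwards only changes larger than the deadband."""
        if self.last_reported is not None and abs(value - self.last_reported) <= self.deadband:
            return  # change too small: suppress the notification
        self.last_reported = value
        for callback in self.subscribers:
            callback(value)
```

With a deadband of 0.5, the samples 20.0, 20.2, 20.4, 21.0, 21.3, and 19.0 produce only three notifications (20.0, 21.0, and 19.0), which reduces network traffic while still delivering every significant change promptly.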

Security is another critical aspect of data management. The OPC UA security architecture distinguishes between a communication layer and an application layer, each with built-in security mechanisms. The communication layer uses encryption, digital certificates, and signatures to establish a secure channel between server and client. In the application layer, users are authenticated and authorized. OPC UA’s security features help secure data distribution and ensure that data integrity and confidentiality are maintained. This is essential for protecting sensitive industrial data and complying with regulatory requirements.
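Message signatures let the receiver detect whether data was altered in transit. The Python sketch below illustrates the principle with HMAC-SHA256 from the standard library, assuming that client and server already share a secret key. In OPC UA, keys are established when the secure channel is opened and the algorithms depend on the selected security policy, so this is not OPC UA's actual message format; it also covers signing only, not encryption.

```python
import hashlib
import hmac
import json
import secrets

# Assumption: client and server already share this key (a secure channel would negotiate it).
KEY = secrets.token_bytes(32)


def sign(payload: dict, key: bytes = KEY) -> dict:
    """Serialize the payload deterministically and attach an HMAC-SHA256 signature."""
    body = json.dumps(payload, sort_keys=True).encode("utf-8")
    return {"body": body, "sig": hmac.new(key, body, hashlib.sha256).hexdigest()}


def verify(message: dict, key: bytes = KEY) -> bool:
    """Recompute the signature; any change to the body or a wrong key makes this fail."""
    expected = hmac.new(key, message["body"], hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, message["sig"])
```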

Scalability is important for data management systems to handle growing volumes of data and expanding industrial networks. OPC UA is both flexible and scalable. This ensures that data management systems can evolve with the increasing complexity and size of industrial operations.

Products in a Data Management System

A comprehensive data management system involves a variety of hardware and software products to effectively handle data collection, storage, processing, integration, and analysis.

Hardware

Data acquisition devices such as IoT sensors are required to capture raw data. High-performance servers are also crucial for data management systems: they handle database management, processing tasks, and storage operations, ensuring reliable performance and uptime for critical applications.

Storage Area Networks (SAN) and Network Attached Storage (NAS) systems provide scalable data storage solutions. A SAN offers high-speed, block-level storage over a dedicated network for large-scale environments, while NAS provides file-level storage over a standard network that is well suited to smaller-scale data sharing and backup needs.

Solid State Drives (SSDs) and Hard Disk Drives (HDDs) offer different storage options. SSDs provide faster data access and, because they have no moving parts, are more resistant to physical shock. HDDs offer larger storage capacities at a lower cost per gigabyte, which makes them suitable for storing large data volumes.

Routers, switches, and wireless access points are required to create the network infrastructure that supports data transmission and connectivity. These devices ensure efficient and secure communication between data management components.

Software

Software for data management can function as standalone tools or be integrated into a unified platform. The main kinds of data management software and tools are listed below.

Database Management Systems (DBMS) provide robust platforms for storing and managing structured and unstructured data while ensuring data integrity and accessibility. Data exchange software is used to transfer large amounts of information securely and efficiently between different systems and users. Master Data Management (MDM) tools maintain a single, consistent source of master data, such as customer, product, or supplier records, across an organization.
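The core idea of MDM is consolidating duplicate records from different systems into one "golden record". The Python sketch below matches customer records from two hypothetical systems (a CRM and an ERP) by normalized e-mail address and merges them with a simple "first non-empty value wins" rule. Both the matching rule and the merge (survivorship) rule are assumptions for illustration; MDM tools typically make such rules configurable and support fuzzy matching.

```python
from typing import Dict, List

# Hypothetical records for the same customer, held in two different systems.
CRM = [{"id": "C-17", "name": "ACME GmbH ", "email": "INFO@ACME.example", "phone": None}]
ERP = [{"id": "4711", "name": "Acme GmbH", "email": "info@acme.example", "phone": "+49 30 0000000"}]


def match_key(record: Dict) -> str:
    """Hypothetical matching rule: records with the same normalized e-mail are one entity."""
    return record["email"].strip().lower()


def build_golden_records(*sources: List[Dict]) -> Dict[str, Dict]:
    """Merge matching records into one master record; the first non-empty value wins."""
    master: Dict[str, Dict] = {}
    for source in sources:
        for rec in source:
            key = match_key(rec)
            golden = master.setdefault(
                key, {"email": key, "name": None, "phone": None, "source_ids": []}
            )
            golden["source_ids"].append(rec["id"])  # keep links back to the source systems
            for attr in ("name", "phone"):
                value = rec.get(attr)
                if golden[attr] is None and value:
                    golden[attr] = value.strip()
    return master
```

Because the sources are passed in priority order, the CRM name is kept while the missing phone number is filled in from the ERP record, and `source_ids` keeps track of where the master record came from.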

For data processing, ETL and ELT tools are required. They extract, transform, and load data from various sources into data warehouses and data lakes. Data analytics and business intelligence (BI) tools enable data visualization, reporting, and analysis. Data science and machine learning platforms offer tools and environments for developing and deploying data science and machine learning models. These solutions are used for advanced analytics and predictive modeling.

Data warehouses and data lakes are platforms required for data storage. Cloud storage and computing platforms also provide scalable and flexible solutions for data storage, processing, and management. These platforms offer various services and tools for handling large-scale data workloads in the cloud.

Data governance and metadata management tools provide frameworks for defining policies, roles, and responsibilities related to data management. Data quality tools profile, cleanse, and enrich data. These tools help maintain high data quality, ensuring that data is accurate, complete, and reliable.
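Profiling and cleansing can be illustrated on a small batch of records. The Python sketch below counts missing values per field (profiling) and then normalizes text, drops incomplete or implausible rows, and removes duplicates (cleansing). The records and the plausible value range are hypothetical assumptions; enrichment, which would add data from other sources, is not shown.

```python
from typing import Dict, List

# Hypothetical batch of sensor records with typical quality problems.
RECORDS = [
    {"sensor": " temp-1", "unit": "C", "value": 21.5},
    {"sensor": "temp-1", "unit": "C", "value": 21.5},   # duplicate after trimming
    {"sensor": "temp-2", "unit": "c", "value": None},   # missing value, inconsistent unit
    {"sensor": "temp-3", "unit": "C", "value": 480.0},  # outside the plausible range
]


def profile(records: List[Dict]) -> Dict[str, int]:
    """Profiling: count missing (None) values per field."""
    fields = {k for r in records for k in r}
    return {f: sum(1 for r in records if r.get(f) is None) for f in sorted(fields)}


def cleanse(records: List[Dict], valid_range=(-40.0, 150.0)) -> List[Dict]:
    """Cleansing: normalize text, drop incomplete or implausible rows, remove duplicates.

    valid_range is an assumed plausibility window for the measured values.
    """
    seen, clean = set(), []
    for r in records:
        row = {"sensor": r["sensor"].strip(), "unit": r["unit"].upper(), "value": r["value"]}
        if row["value"] is None or not valid_range[0] <= row["value"] <= valid_range[1]:
            continue
        key = tuple(row.items())
        if key not in seen:
            seen.add(key)
            clean.append(row)
    return clean
```

Of the four input records, only one clean `temp-1` row remains: the second is a duplicate once whitespace is trimmed, the third has no value, and the fourth lies outside the assumed range.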

Data security software provides robust measures for protecting data from unauthorized access, breaches, and other security threats. These solutions help ensure data privacy and compliance with regulations. Backup and recovery tools ensure that data is regularly backed up and can be quickly restored in case of data loss or corruption.
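A backup is only useful if the restored data is intact. The Python sketch below records a SHA-256 checksum when a file is backed up and refuses to restore a copy whose checksum no longer matches. The function names and file layout are hypothetical, and real backup tools add scheduling, versioning, and off-site copies.

```python
import hashlib
import shutil
import tempfile
from pathlib import Path


def sha256_of(path: Path) -> str:
    """Hash a file in 64 KiB chunks so large files need not fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as f:
        for chunk in iter(lambda: f.read(65536), b""):
            digest.update(chunk)
    return digest.hexdigest()


def backup(src: Path, backup_dir: Path) -> str:
    """Copy a file into the backup directory and return its checksum at backup time."""
    backup_dir.mkdir(parents=True, exist_ok=True)
    shutil.copy2(src, backup_dir / src.name)
    return sha256_of(src)


def restore(backup_dir: Path, name: str, target: Path, expected: str) -> bool:
    """Restore only if the backup copy still matches the recorded checksum."""
    copy = backup_dir / name
    if sha256_of(copy) != expected:
        return False  # the backup itself is corrupted; do not restore it
    shutil.copy2(copy, target)
    return True


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        src = Path(tmp) / "orders.csv"
        src.write_text("id,qty\n1,5\n")
        checksum = backup(src, Path(tmp) / "backup")
        src.unlink()  # simulate data loss
        print(restore(Path(tmp) / "backup", "orders.csv", src, checksum))  # True
```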

Facts & Figures

Data management is rapidly gaining significance. According to a report by the market research platform “Gitnux”, 95 percent of surveyed CEOs say that data management and analysis are crucial for business growth, and 73 percent of surveyed companies are investing in big data to stay competitive. More than 80 percent of surveyed corporate leaders say that mastering data management will be very important in the next five years. The market for data governance tools is expected to grow by 22 percent between 2020 and 2028, and by 2025, 67 percent of all data management tasks are expected to be automated using machine learning and AI.

Successful Examples of Data Management

As IoT devices are increasingly used across all industries, the volume of data generated by sensors and embedded systems has grown exponentially. These devices continuously produce a diverse array of data, including performance metrics, environmental conditions, and operational statuses. Proper data management systems provide the necessary infrastructure to collect, store, and organize this massive influx of data efficiently. OPC UA is becoming increasingly important for ensuring seamless data exchange and interoperability.

Below are real-world examples that demonstrate how OPC UA has contributed to data management in different industries, including aerospace, automotive production, and the digitalization of the energy industry.

Data Management at Airbus Defense and Space

To optimize data management and communication in its ‘Technological Experiments in Zero Gravity’ (TEXUS) project, Airbus Defense and Space has been using OPC UA from the OPC Foundation since 2017. Based on the TEXUS OPC UA support specification, all sensors, actuators, and controllers are controlled via OPC UA. OPC UA enables centralized control as well as data conversion through adapters for database integration. Communication data is transmitted in real time. This results in more efficient data management across various platforms.

“We use OPC UA in the onboard system, i.e. in the flight software. We use it there to capture data, and to control the experiment module itself. We also use OPC UA in the ground segment, where all displays speak OPC UA. This way, development engineers can access the data.”

Enrico Noack

Engineer

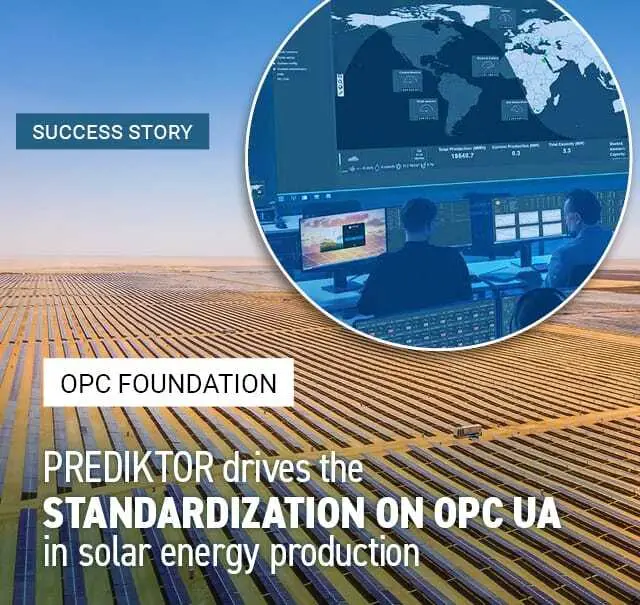

Data Management at Scatec

Energy solutions provider Scatec is using the OPC-UA-based solution “PowerView” from the OPC Foundation for data management in solar fields. In each solar field, data is generated by different types of equipment from various suppliers. To combine all asset data, a MAP gateway system from systems integrator Prediktor is used. More than 100,000 data points are received per second. PowerView captures all generated data from all assets. The data is standardized via OPC UA and aggregated into a single ‘plant’ structure.

“Essentially, it's about gaining insight into production and taking data-driven actions to optimize operations. A common challenge when working with all these different sectors is getting a handle on all the data points from the process, ensuring that they are accurate, that they are of good quality, and that they are structured in a meaningful way, so that they can be used effectively. That in turn could be the key to the concept of this data harmonization and the OPC UA standard. This technology has been extremely important for digitalization in the industry, because without access to operational data, you can do very little in terms of optimization, because you need the truth that lies in the data you collect.”

Thomas Pettersen

Vice President Operations Management

Data Management at Groupe Renault

Groupe Renault has digitized production at 17 manufacturing sites by implementing the OPC UA standard and companion specifications from the OPC Foundation. Data collected by sensors is transferred to the Google Cloud Platform. In 2019, the Industrial Data Management (IDM 4.0) platform enabled data to be collected from many sources. The information is contextualized, structured, and aggregated so that it is accessible as big data for control and analysis.

The Future of Data Management

The future of data management is evolving rapidly, driven by advancements in technology, increasing volumes of data, and the growing need for organizations to leverage data for strategic advantage.

Companies are looking for more flexible, scalable, and cost-effective solutions to handle and manage data, and cloud-based data management is becoming more popular in this context. It allows data storage and processing capacity to be adapted in real time. On-premises data management systems and data centers are increasingly being replaced by cloud platforms.

New technologies like data fabrics are emerging. Data fabrics are unified architectures that use smart automated systems to simplify the end-to-end integration of cloud environments and data pipelines. The emergence and development of these technologies will enable companies to gain a more comprehensive overview of business performance. This results in a better understanding of consumer behavior, for example.

Artificial Intelligence (AI) and Machine Learning (ML) are also gaining significance in data management. Companies are using these technologies for the analysis of datasets and the identification of patterns. Additionally, with increasing data volumes, coupled with a shortage of data science specialists, the development of automated data preparation is gaining ground. Software providers are using AI and machine learning to automate data cleansing and preparation.

The Advantages of Data Management

Advantages

- Improved data quality

- Reduced costs

- Increased security

- Enhanced decision-making

- Improved scalability

Companies that implement proper data management systems gain numerous benefits.

Data management breaks down data silos by centralizing data repositories and facilitating collaboration across departments. This allows teams to access and share data more easily. Centralized data management also ensures that all teams are working with the same, up-to-date information, reducing discrepancies and ensuring consistency in reporting and analysis.

Risks associated with data security, compliance, and privacy are mitigated. By implementing robust data governance policies and security measures, organizations can safeguard sensitive information and ensure compliance with regulations.

By centralizing and analyzing customer data in real time, organizations can identify trends, anticipate needs, and proactively address issues, providing a quick and responsive customer experience. Data management enables organizations to adapt quickly to changing market conditions and emerging trends. By leveraging real-time data insights, businesses can respond promptly to customer needs, competitive pressures, and market dynamics.

Effective data management systems are designed to scale with the growing needs of the company. This enables the flexible expansion of data storage, processing capabilities, and user access as the business evolves.

The Challenges of Data Management

There are five main challenges that companies must master in order to implement an effective data management system.

Companies must be able to protect sensitive data from cyberattacks and breaches. Data privacy regulations such as the CCPA, GDPR, and the Data Protection & Privacy Act must be adhered to.

It is also extremely important to maintain high data quality. Data that is inconsistent, incomplete, or inaccurate can result in flawed analyses, which in turn lead to poor decision-making.

Integrating data from disparate sources and systems can be hindered by data silos, where information is stored in isolated repositories without easy access or interoperability. Variations in data formats, structures, and standards across different systems make integration complex and may require extensive data transformation and mapping.

Managing and storing large volumes of data efficiently requires scalable storage solutions, robust infrastructure, and optimized data processing algorithms. Ensuring optimal performance in data processing, analysis, and retrieval becomes increasingly challenging as data volumes grow. This requires efficient indexing, caching, and query optimization techniques. Scaling infrastructure to accommodate growing data volumes while balancing cost considerations requires careful planning and optimization to avoid over-provisioning or under-utilization of resources.
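Indexing is one of the techniques named above. The Python sketch below uses the standard library's `sqlite3` module with an in-memory table as a small stand-in for a large data store and compares SQLite's query plan before and after creating an index on the filtered column. The table, column, and index names are hypothetical, and the exact wording of the plan varies between SQLite versions.

```python
import sqlite3

# In-memory stand-in for a large table of sensor readings (100 sensors, 10,000 rows).
db = sqlite3.connect(":memory:")
db.execute("CREATE TABLE readings (sensor_id TEXT, ts INTEGER, value REAL)")
db.executemany(
    "INSERT INTO readings VALUES (?, ?, ?)",
    [(f"s{i % 100}", i, float(i)) for i in range(10_000)],
)

QUERY = "SELECT ts, value FROM readings WHERE sensor_id = ?"


def plan(sql: str) -> str:
    """Return SQLite's query plan as text (the last column of each plan row is the detail)."""
    rows = db.execute("EXPLAIN QUERY PLAN " + sql, ("s42",)).fetchall()
    return " ".join(str(row[-1]) for row in rows)


before = plan(QUERY)  # full table scan: every row must be read
db.execute("CREATE INDEX idx_readings_sensor ON readings (sensor_id)")
after = plan(QUERY)   # index search: only the matching rows are visited
```

Without the index, every query reads all rows, so the cost grows with the table; with the index, SQLite can jump directly to the rows for one sensor. The trade-off is extra storage and slower inserts, which is part of the cost balancing described above.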

Compliance with evolving data regulations and privacy laws presents challenges in terms of understanding and interpreting legal requirements and adapting internal policies and procedures accordingly. Clarifying data ownership and accountability within organizations can be challenging, especially in decentralized environments or when dealing with third-party data sources.

Outlook – Next-Level Data Management

Trends in data management have emerged as a result of the shortage of data specialists and experts, the increased demand for data security and compliance, and the need for better data integration. The data management of the future involves hybrid cloud platforms, metadata management, and low-code/no-code platforms.

Hybrid Cloud Platforms

For industries that deal with sensitive data and are subject to strict regulations, such as healthcare and pharma, the energy industry, and the public sector, hybrid cloud platforms are becoming more popular. Hybrid cloud platforms combine the capabilities of public clouds with the security and control of private clouds. This makes them well suited to organizations that require customized data storage and management strategies.

Metadata Management

Metadata is data that provides information about other data, such as its content, origin, structure, or usage. As data ecosystems become more complex, it is becoming increasingly important to properly manage metadata. An effective metadata framework supports data scientists and analysts in understanding datasets that are relevant. In machine learning and AI, metadata plays a crucial role in maintaining the traceability and transparency of decisions that are made based on AI.
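A simple metadata catalog shows how metadata supports traceability. In the Python sketch below, each catalog entry records a dataset's owner, origin, schema, and upstream datasets, and a lineage lookup lists every dataset that, for example, a machine learning training set was derived from. The classes, fields, and dataset names are hypothetical; dedicated metadata management tools offer far more, such as search and automated lineage capture.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import Dict, List


@dataclass
class DatasetMetadata:
    """Hypothetical catalog entry: data about a dataset, not the dataset itself."""
    name: str
    owner: str                  # accountable data owner or steward
    source: str                 # origin of the data
    schema: Dict[str, str]      # column name -> type
    created: date
    derived_from: List[str] = field(default_factory=list)  # lineage: upstream datasets


CATALOG: Dict[str, DatasetMetadata] = {}


def register(meta: DatasetMetadata) -> None:
    """Add or replace a catalog entry."""
    CATALOG[meta.name] = meta


def lineage(name: str) -> List[str]:
    """Follow derived_from links to list every upstream dataset (depth-first)."""
    result: List[str] = []
    for parent in CATALOG[name].derived_from:
        result.append(parent)
        result.extend(lineage(parent))
    return result
```

If a training set is registered as derived from a cleaned sensor dataset, which in turn is derived from raw sensor data, `lineage("training_set")` returns both upstream datasets, making it possible to trace which data an AI model's decisions are based on.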

Low-Code/No-Code Platforms

Low-code/no-code platforms are a growing trend in software development, including in data integration, analytics, and data accessibility. These platforms enable people with little or no technical knowledge to design and implement data integration workflows. They play a crucial role in connecting diverse data sources, transforming data formats, and developing data-driven applications. This trend emerged as a response to the current shortage of data scientists and experts.